The 80% Problem: Why AI in Finance Needs to Be Boring to Be Trusted

Let’s get one thing straight right out of the gate: If a marketing automation tool hallucinates a catchy blog post title, it’s a funny Tuesday at the office. If an AI financial assistant hallucinates an extra zero on a compliance report, it’s a fast-tracked SEC violation and a very uncomfortable phone call with your board.

In the finance world, we have a specific term for software tools that are "almost" right. We call them liabilities.

Right now, Silicon Valley is obsessed with pitching "autonomous, magical AI agents" that promise to do your taxes, optimize your spend, close your books, and maybe even walk your dog. But here is the harsh reality that those flashy pitch decks conveniently ignore: When it comes to corporate finance, 90% accurate = 0% useful.

If you're a CFO, a VP of Finance, or a controller, you don't want magic. Magic implies illusions, smoke, and mirrors. You want boring. You need boring. And if you are hesitant to hand over your company's credit cards to a talking algorithm, you are certainly not alone.

The Trust Gap: What the CFO Surveys Are Screaming

You’ve seen the hype cycles on LinkedIn, but let’s look at what the people actually holding the purse strings think. Across more than eight major CFO surveys—including hard data from Wakefield, Gartner, Kyriba, Deloitte, Billtrust, and Salesforce—a massive, glaring "trust gap" emerges.

Finance leaders aren't tech-averse Luddites; they are professional risk managers. They are practically screaming from the rooftops that they aren't ready to hand over the keys to an AI that operates like a mysterious black box. Why? Because the current iteration of generic, off-the-shelf AI isn't built for the unforgiving rigor of finance.

Recent industry data reveals a terrifying statistic: AI currently hallucinates in a staggering 41% of finance-related queries. Let that sink in for a moment. Roughly two times out of five, the AI is essentially playing a game of "Two Truths and a Lie" with your company's financial data. Would you hire a human accountant who confidently makes up numbers 41% of the time? Of course not. So why would we accept it from software?

We’ve already seen the disastrous consequences of deploying untested AI in high-stakes environments. Remember when Google's Bard wiped billions off its parent company's market value over a single factual error in a demo? Or the infamous airline chatbot that confidently invented a refund policy, which the airline was then ordered to honor?

This isn't just theoretical risk anymore. There are currently over 120 documented court cases winding their way through the legal system stemming directly from AI hallucinations. Corporate finance is not a sandbox for beta testing; it's the central nervous system of your business.

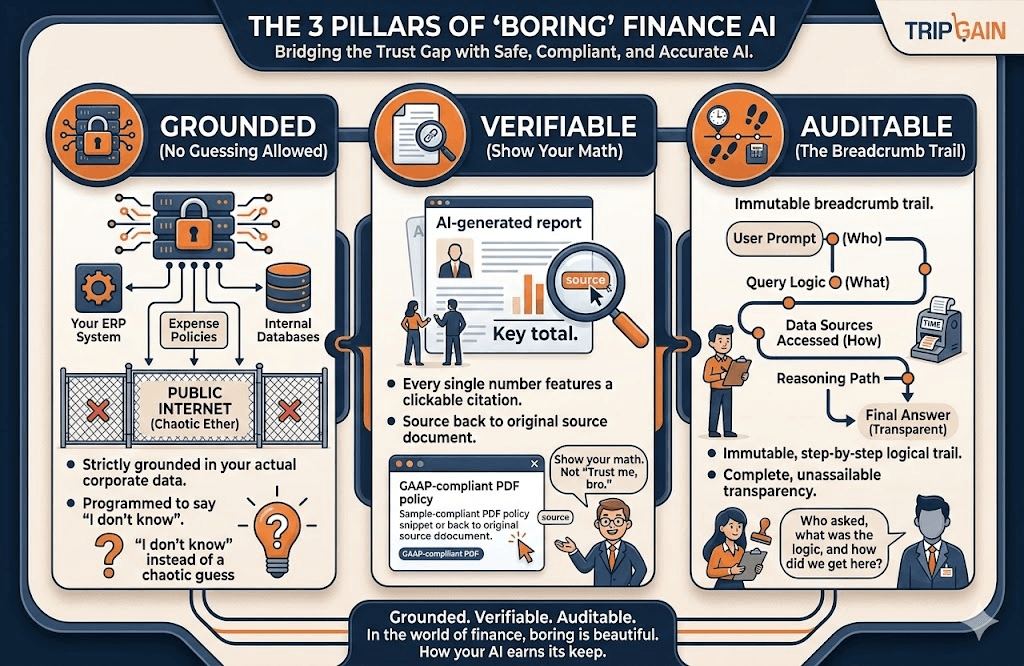

The 3 Pillars of "Boring" AI (And Why We Love It)

So, how do we bridge this massive trust gap? We change the paradigm. We stop trying to make AI "magical" and we start making it boring again. In the world of finance, boring is beautiful. Boring means predictable, safe, compliant, and accurate.

If you want an AI offering that actually earns its keep (and doesn't keep your legal team awake at night), it needs to be built strictly on a 3-pillar framework:

- Grounded (No guessing allowed): A finance AI shouldn’t be pulling answers from the vast, chaotic, and often entirely incorrect ether of the public internet. It needs to be strictly grounded in your actual corporate data, your specific expense policies, and your locked-down ERP systems. If the answer isn't explicitly in your data, the AI should be programmed to say "I don't know," rather than creatively guessing.

- Verifiable (Show your math): You should never have to take an AI’s word for it. Every single number, every policy claim, and every generated spend report must feature a clear, clickable citation back to the original source document. "Trust me, bro" is not a recognized Generally Accepted Accounting Principle (GAAP).

- Auditable (The breadcrumb trail): When the external auditors come knocking (and they always do), your AI cannot just shrug. It needs to leave an immutable, step-by-step breadcrumb trail. You must be able to see exactly who asked the prompt, exactly what the AI answered, and the precise logical steps it took to arrive at that conclusion. Complete, unassailable transparency.
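
To make the three pillars a little more concrete, here is a minimal Python sketch, not a production design. Every name in it (`SourceRecord`, `Answer`, `AuditLog`, `answer_question`) is hypothetical, and the "AI" is a plain lookup standing in for whatever model a real product would use. The point is the shape: answers come only from supplied records, every answer carries the IDs of its source documents, and every question lands in a hash-chained log. Note that a hash chain makes tampering detectable, not impossible; true immutability would also need write-once storage, which this sketch assumes away.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class SourceRecord:
    doc_id: str   # hypothetical ID of the source document, e.g. an invoice number
    field: str    # the metric this record contributes to
    value: float


@dataclass(frozen=True)
class Answer:
    text: str
    citations: tuple  # doc_ids backing the answer; empty when the answer is unknown


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of a log entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log in which each entry stores the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def append(self, user: str, question: str, answer: Answer) -> None:
        body = {
            "user": user,
            "question": question,
            "answer": answer.text,
            "citations": list(answer.citations),
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else "0" * 64,
        }
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


def answer_question(field: str, records: list, user: str, log: AuditLog) -> Answer:
    """Grounded: use only the supplied records. Verifiable: cite them. Auditable: log it."""
    matches = [r for r in records if r.field == field]
    if not matches:
        answer = Answer("I don't know", ())  # no data means no guess
    else:
        total = sum(r.value for r in matches)
        answer = Answer(f"{field} = {total:.2f}", tuple(r.doc_id for r in matches))
    log.append(user, field, answer)
    return answer
```

Asking for a field that has matching records returns a total plus the document IDs behind it; asking for anything else returns "I don't know" with no citations. Either way the exchange is logged, and `log.verify()` lets an auditor check that nothing was edited after the fact.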

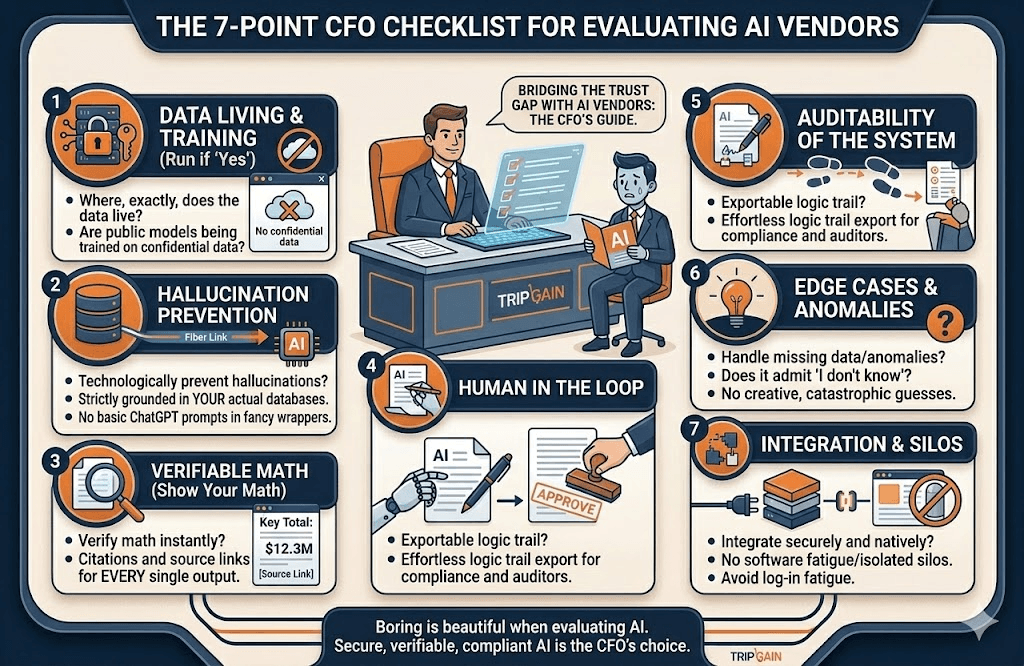

The 7-Point CFO Checklist for Evaluating AI Vendors

We know your inbox is currently flooded with vendors promising that their new "AI Copilot" will revolutionize your finance team. Before you take the demo, run them through this 7-point CFO checklist. If the sales rep starts sweating or dodging the questions, politely show them the virtual door:

- Where, exactly, does the data live? Are you training your public models on my highly confidential financial data? (If yes, run away.)

- How do you technologically prevent hallucinations? Look for systems strictly grounded in your actual databases, not just tools running basic ChatGPT prompts in a fancy wrapper.

- Can my team verify the math instantly? Show me the receipts. Literally. I want to see citations and source links for every single output your tool generates.

- Is there a forced "Human in the Loop" workflow? The AI should do the heavy lifting to draft the analysis or flag the anomaly, but a qualified human professional must always be the one to click “Approve.”

- What is the auditability of the system? Can I effortlessly export the logic trail for my compliance team and external auditors?

- How does the system handle edge cases, missing data, or weird anomalies? Does it humbly admit when it doesn't know the answer, or does it try to be "helpful" by making a catastrophic guess?

- Does it integrate securely and natively with our existing stack? Or is this just another isolated, siloed dashboard that my team has to log into, adding to our software fatigue?
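
If a vendor claims a forced "Human in the Loop" workflow, it helps to know what "forced" should look like in practice. The sketch below is one possible shape, under stated assumptions: `DraftEntry`, `review`, and `post_to_ledger` are hypothetical names, and the ledger is just a Python list. The idea is that the approval gate lives in the code path itself, so an AI-drafted entry cannot reach the books unless someone other than the drafter has approved it.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class DraftEntry:
    description: str
    amount: float
    drafted_by: str = "ai-assistant"   # hypothetical identity of the drafting system
    status: Status = Status.DRAFT
    reviewed_by: Optional[str] = None


def review(entry: DraftEntry, reviewer: str, approve: bool) -> DraftEntry:
    """Record a reviewer's decision; the drafter may not review its own entry."""
    if reviewer == entry.drafted_by:
        raise PermissionError("the drafter cannot review its own entry")
    if entry.status is not Status.DRAFT:
        raise ValueError("entry has already been reviewed")
    entry.status = Status.APPROVED if approve else Status.REJECTED
    entry.reviewed_by = reviewer
    return entry


def post_to_ledger(entry: DraftEntry, ledger: list) -> None:
    """Refuse to post anything that has not been explicitly approved."""
    if entry.status is not Status.APPROVED:
        raise PermissionError("only approved entries can be posted")
    ledger.append(entry)
```

A useful demo question follows directly from this: ask the vendor to show you what happens when someone tries to post a draft that nobody has approved. In this sketch, `post_to_ledger` raises an error rather than quietly letting it through.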

The Bottom Line: Winning the 80%

The future of finance isn't going to be run by generic chatbots. It’s certainly not going to be run by flashy, fully autonomous agents that guess their way through complex expense reports, compliance checks, and quarterly forecasts.

The real winners in this space will be the tools that prioritize absolute accuracy over tech-demo flashiness. At the end of the day, credibility with a CFO isn't won with a cool parlor trick. It’s won by solving the 80% problem—automating the tedious, data-heavy, high-stakes work, and getting it right, 100% of the time.

So here is to the boring AI. Long may it reign. May it keep our books perfectly balanced, our compliance policies flawlessly intact, and our collective blood pressure blissfully low.

Contact us at TripGain to get an AI consultation.

Godi Yeshaswi

Senior Product Marketer